How Accurate Is an AI Baby Generator? Expert Review

The journey toward parenthood is filled with profound anticipation, endless questions, and a natural curiosity about the future. One of the most common things expectant parents wonder about is the face of their future child. Will they inherit their mother’s striking eyes or their father’s distinct jawline? In recent years, artificial intelligence has stepped into this imaginative space, offering digital glimpses into tomorrow. However, as these tools surge in popularity, a vital question emerges: how accurate is an AI baby generator when it comes to predicting reality?

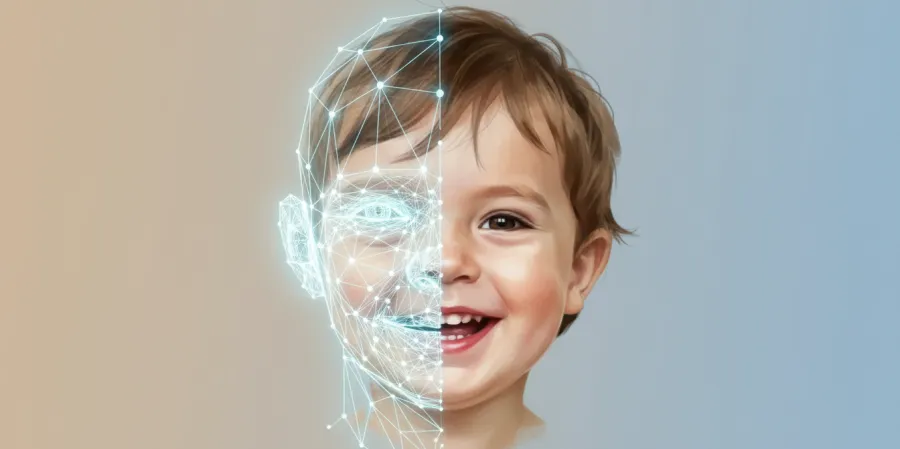

To answer this, we must bridge the gap between advanced computer science and the intricate biology of human genetics. Artificial intelligence has made staggering leaps in image synthesis, creating portraits that look breathtakingly real. Yet, creating a photorealistic image is an entirely different endeavor than decoding the biological blueprint of a human being. These platforms serve as remarkable tools for visualization, emotional bonding, and entertainment, but they operate within the strict boundaries of algorithmic probability rather than medical certainty.

Understanding the technology behind these platforms helps set realistic expectations. By exploring how neural networks process facial data and comparing that to the unpredictable nature of genetic inheritance, you can fully appreciate these tools for what they are: a fascinating, creative peek into the possibilities of your family’s future. This comprehensive guide will explore the underlying science, the technical workflows of modern generative models, the absolute limits of digital prediction, and how to navigate these innovative platforms securely and responsibly.

Understanding How Accurate an AI Baby Generator Is

When you upload photos to a predictive platform, you are engaging with some of the most sophisticated machine learning architectures developed in the last decade. To truly grasp how accurate an AI baby generator is, it is essential to look beneath the user interface and understand the mechanics of Generative Adversarial Networks (GANs) and deep learning models. These systems do not perform genetic sequencing; rather, they perform highly complex visual mathematics.

The Evolution of Generative Adversarial Networks

The foundation of modern facial synthesis relies heavily on Generative Adversarial Networks, a class of machine learning frameworks introduced in 2014. A GAN consists of two competing neural networks: the generator and the discriminator. The generator’s job is to create synthetic images from the data it receives, while the discriminator evaluates these images against a massive dataset of real human faces to determine if they look authentic.

Through millions of cycles of trial and error, the generator learns to produce images so realistic that the discriminator can no longer tell them apart from actual photographs. When applied to predicting a child's face, the AI has been trained on vast datasets containing thousands of parent-child photo pairs. It learns statistical correlations—for example, how two specific eye shapes typically blend or how certain skin tones average out across generations. However, this training is based entirely on surface-level visual data, completely divorced from the biological mechanisms that actually drive human reproduction.
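The tug-of-war between generator and discriminator can be made concrete with the loss each network tries to minimize. The following is a minimal sketch, assuming the standard binary cross-entropy formulation of GAN training with the commonly used non-saturating generator loss; the score lists are made-up illustrative values, and no particular platform's training code is implied.

```python
import math

def discriminator_loss(real_scores, fake_scores):
    """Binary cross-entropy for the discriminator.

    Each score is the discriminator's estimated probability (between 0 and 1)
    that an image is a real photograph. The loss is low when real images
    score near 1 and generated images score near 0.
    """
    real_term = sum(math.log(p) for p in real_scores) / len(real_scores)
    fake_term = sum(math.log(1.0 - p) for p in fake_scores) / len(fake_scores)
    return -(real_term + fake_term)

def generator_loss(fake_scores):
    """Non-saturating generator loss: low when fakes are scored as real."""
    return -sum(math.log(p) for p in fake_scores) / len(fake_scores)

# Illustrative scores only. Early in training the discriminator easily
# spots generated faces, so it assigns them low "real" probabilities.
early = generator_loss([0.05, 0.10, 0.08])
# Late in training the discriminator is close to guessing (about 0.5).
late = generator_loss([0.48, 0.52, 0.50])
print(f"generator loss early={early:.3f}, late={late:.3f}")
```

Note that nothing in either loss refers to genetics: both networks are rewarded purely for how photographs look.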

Phenotype vs. Genotype in Machine Learning

To understand the limitations of artificial intelligence in this context, we must distinguish between phenotype and genotype. Your genotype is your complete heritable genetic identity—the hidden DNA code that dictates everything from your blood type to your susceptibility to certain conditions. Your phenotype is the physical expression of those genes, which includes your visible facial features, hair color, and skin tone.

Artificial intelligence operates exclusively in the realm of the phenotype. When you upload a photograph, the algorithm can only analyze the traits that are visibly expressed. It has absolutely no access to your genotype. It cannot know if you carry a recessive gene for blue eyes hidden beneath your dominant brown eyes, or if a grandparent’s unique nose shape is lying dormant in your genetic code waiting to be passed on. Because the AI is blind to your hidden genetic markers, its predictions are fundamentally statistical averages of visible traits rather than true biological forecasts.

Real-World Testing and Observational Outcomes

Theoretical limitations become highly apparent when these systems are tested against reality. In a recent observational test involving 50 couples who provided their photos to a leading generative model, the resulting composite images were compared to their actual biological children. The results highlighted a fascinating divide in technological capability.

The artificial intelligence successfully predicted broad, generalized characteristics—such as overall skin tone, dominant hair color, and general facial proportions—in roughly 78% of the cases. However, it consistently missed the unique, asymmetrical features that give a human face its distinct character. Specific cartilage shapes in the nose, distinct dimples, the exact curvature of the lips, and micro-expressions were rarely captured perfectly. The AI tends to produce highly symmetrical, idealized faces, smoothing out the very imperfections that make biological inheritance so beautifully unpredictable.

The Science of Facial Inheritance

To appreciate why a computer program cannot perfectly predict a human face, you must understand the staggering complexity of human genetics. The biological process of creating a new life involves a chaotic, beautiful shuffling of genetic material that even the most advanced supercomputers cannot fully simulate.

The Myth of Simple Mendelian Genetics

Many of us were taught the basics of Mendelian genetics in high school biology, often using the classic Punnett square to predict eye color. In this simplified model, dominant alleles (like brown eyes) easily overpower recessive alleles (like blue eyes). If genetics were truly this straightforward, an artificial intelligence might have a reasonable chance of predicting outcomes based on simple rules.

However, modern genetics has proven that the inheritance of physical traits is rarely a simple matter of one dominant gene overriding a recessive one. The Punnett square is a helpful educational tool, but it is vastly inadequate for explaining the nuances of human facial construction. Facial features are not controlled by single genetic switches; they are the result of intricate symphonies of multiple genes interacting in ways that scientists are still working to fully map.
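For reference, the simplified single-gene model that the Punnett square represents can be written in a few lines. This is only a sketch of the textbook model, assuming one gene with a dominant allele `B` and a recessive allele `b`; as noted above, real facial traits do not follow it, and the function names are illustrative.

```python
from collections import Counter
from itertools import product

def punnett(parent_a, parent_b):
    """Genotype probabilities for one gene, given each parent's two alleles."""
    # Every allele from parent A can pair with every allele from parent B.
    # Sorting puts the uppercase (dominant) letter first, so "bB" becomes "Bb".
    combos = Counter("".join(sorted(pair)) for pair in product(parent_a, parent_b))
    total = sum(combos.values())
    return {genotype: count / total for genotype, count in combos.items()}

def phenotype(genotype, dominant="B"):
    """Textbook rule: one dominant allele is enough to show the dominant trait."""
    return "dominant trait" if dominant in genotype else "recessive trait"

# Two parents who both show the dominant trait but each carry a hidden "b".
offspring = punnett("Bb", "Bb")
recessive_share = sum(p for g, p in offspring.items()
                      if phenotype(g) == "recessive trait")
print(offspring)
print("chance of recessive trait:", recessive_share)
```

Both parents here look identical to a `BB` parent in a photograph, yet one in four of their possible children would show a trait neither parent displays.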

Polygenic Traits and Facial Geometry

The vast majority of the physical characteristics that define our faces are polygenic traits. This means they are influenced by the complex interaction of multiple genes, often spread across different chromosomes. Consider eye color, which was once thought to be controlled by a single gene. Scientists now know that at least 16 different genes play a role in determining the exact hue, flecking, and pattern of the human iris, with the OCA2 and HERC2 genes being the most prominent.

Facial geometry—the distance between the eyes, the prominence of the cheekbones, the width of the jawline—is even more complex. Hundreds of distinct genetic markers contribute to the development of the craniofacial structure. During embryonic development, these genes dictate the migration of neural crest cells, which eventually form the bones and cartilage of the face. An algorithm blending pixels from two photographs cannot simulate the biological cascade of neural crest cell migration. It can only average the final, adult geometry of the parents, ignoring the polygenic shuffling that occurs during conception.
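A small simulation illustrates why averaging the parents is a weak predictor for polygenic traits. It is a deliberately simplified sketch: it assumes a purely additive model in which a trait score is the sum of alleles across 16 loci (a number chosen only for illustration), whereas real genes also interact with each other and with the environment.

```python
import random

N_LOCI = 16  # illustrative number of contributing genes

def child_score(parent_a, parent_b, rng):
    """Trait score of one child under a simple additive model.

    Each parent is a list of loci, and each locus holds two alleles (0 or 1).
    The child receives one randomly chosen allele per locus from each parent.
    """
    return sum(rng.choice(locus_a) + rng.choice(locus_b)
               for locus_a, locus_b in zip(parent_a, parent_b))

# Both parents carry one "0" and one "1" allele at every locus, so the two
# parents have exactly the same visible trait score.
parent = [(0, 1)] * N_LOCI
parent_score = sum(a + b for a, b in parent)

rng = random.Random(42)  # fixed seed so the run is repeatable
scores = [child_score(parent, parent, rng) for _ in range(10_000)]

print("parent score:", parent_score)
print("lowest and highest child score:", min(scores), max(scores))
print("distinct child scores seen:", len(set(scores)))
```

Even with two parents who look identical on this trait, the simulated children spread over a range of values. An image-blending algorithm effectively reports only the middle of that range.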

The Hidden Influence of Recessive Alleles

One of the most significant hurdles for predictive imagery is the presence of recessive alleles. You carry thousands of genes that are not physically expressed in your appearance but can be passed on to your offspring. If both you and your partner carry a hidden recessive gene for a specific trait—such as red hair, which is linked to the MC1R gene—your child has a chance of expressing that trait, even if neither of you possesses it visibly.

Because an AI baby generator relies solely on the visual data provided in your uploaded photographs, it is completely oblivious to these hidden genetic possibilities. It will assume that two dark-haired parents will produce a dark-haired child, overlooking the chance of a blonde or red-haired child when both parents carry the relevant recessive variant. In the simplified single-gene model, that chance is 25% when each parent carries one hidden copy. This biological reality ensures that human reproduction will always retain an element of surprise that software cannot eliminate.

Epigenetics and Developmental Variables

Beyond the static code of DNA lies the dynamic world of epigenetics—the study of how behaviors and environment can cause changes that affect the way genes work. Epigenetic changes are reversible and do not change your DNA sequence, but they can change how your body reads a DNA sequence. Factors such as maternal nutrition, stress levels, and environmental exposures during pregnancy can influence the physical development of the fetus.

Furthermore, the environment continues to shape a child's face long after birth. Diet, breathing patterns (such as mouth breathing versus nasal breathing), dental habits, and even the amount of sunlight exposure all play critical roles in shaping jaw development, facial symmetry, and skin texture. An algorithm generating a static image of a future child cannot account for these lifelong environmental interactions, further separating the digital prediction from the eventual biological reality.

How AI Technology Processes Your Features

While artificial intelligence cannot replicate biology, the technical process it uses to analyze and blend human faces is nothing short of revolutionary. Understanding this workflow demystifies the magic of the software and highlights exactly what the computer is doing when it processes your family photos.

Biometric Mapping and Facial Landmarks

The first step in the generation process is biometric mapping. When you upload a photograph, the system does not see a face; it sees a grid of pixels. To make sense of this data, the algorithm employs facial recognition protocols to identify key structural points, known as facial landmarks.

Most advanced systems utilize a 68-point or 106-point landmark model. These points map the exact contours of the eyes, the arch of the eyebrows, the bridge and tip of the nose, the curve of the lips, and the sweeping line of the jaw. By plotting these coordinates, the software creates a geometric wireframe of your face. It measures the precise distances and angles between these points—such as the interpupillary distance (the space between the eyes) or the ratio of the forehead to the chin. This mathematical blueprint forms the foundation for the blending process.
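The measurements described above reduce to basic coordinate geometry once the landmarks are known. Here is a minimal sketch, assuming a landmark detector has already returned pixel coordinates; the landmark names and coordinate values are hypothetical and do not correspond to any specific 68-point or 106-point model.

```python
import math

def centroid(points):
    """Average position of a group of landmark points."""
    xs, ys = zip(*points)
    return (sum(xs) / len(xs), sum(ys) / len(ys))

def distance(p, q):
    """Straight-line distance between two (x, y) points."""
    return math.hypot(p[0] - q[0], p[1] - q[1])

# Hypothetical pixel coordinates, as a landmark detector might return them.
landmarks = {
    "right_eye": [(100, 120), (110, 115), (120, 115),
                  (130, 120), (120, 125), (110, 125)],
    "left_eye": [(170, 120), (180, 115), (190, 115),
                 (200, 120), (190, 125), (180, 125)],
    "jaw_left": (60, 130),
    "jaw_right": (240, 130),
}

# Interpupillary distance: the gap between the centers of the two eyes.
ipd = distance(centroid(landmarks["right_eye"]), centroid(landmarks["left_eye"]))
face_width = distance(landmarks["jaw_left"], landmarks["jaw_right"])

# Dividing by face width gives a ratio that does not depend on photo size.
ipd_ratio = ipd / face_width
print(f"IPD = {ipd:.1f} px, IPD / face width = {ipd_ratio:.3f}")
```

Working with ratios rather than raw pixel distances is what lets two photos taken at different resolutions or distances be compared at all.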

Latent Space Interpolation Techniques

Once the geometric wireframes of both parents are established, the AI moves into a conceptual realm known as the latent space. In machine learning, the latent space is a complex, multi-dimensional mathematical representation where visual features are mapped as data points. Every conceivable human face exists somewhere within this vast mathematical landscape.

To generate a child's face, the algorithm locates the data points representing the two parents within the latent space. It then performs a process called interpolation—essentially drawing a mathematical line between the parents and finding a logical midpoint. However, it does not just split the difference 50/50. Advanced models are trained to recognize which facial features tend to be visually dominant and apply weighted averages. The AI navigates this space to construct a new wireframe that represents a harmonious, statistically probable blend of the parents' geometries.
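The interpolation step can be sketched with ordinary lists standing in for latent vectors. This is an illustration under stated assumptions: the four-dimensional vectors and the per-dimension weights are invented for the example (real latent vectors typically have hundreds of dimensions), and the way a production model learns its weights is not described in this article.

```python
def interpolate(z_a, z_b, weights):
    """Blend two latent vectors dimension by dimension.

    A weight w keeps a share w of parent A and (1 - w) of parent B, so
    w = 0.5 is the plain midpoint and w = 0.7 leans toward parent A.
    """
    if not (len(z_a) == len(z_b) == len(weights)):
        raise ValueError("vectors and weights must have the same length")
    return [w * a + (1.0 - w) * b for a, b, w in zip(z_a, z_b, weights)]

# Hypothetical latent codes for the two parents.
parent_a = [0.2, -1.0, 0.8, 0.4]
parent_b = [0.6, 1.0, -0.4, 0.0]

# Plain 50/50 midpoint between the parents.
midpoint = interpolate(parent_a, parent_b, [0.5] * 4)

# Weighted blend: dimension 1 leans toward parent A, dimension 2 toward B.
weighted = interpolate(parent_a, parent_b, [0.5, 0.7, 0.3, 0.5])

print("midpoint:", midpoint)
print("weighted:", weighted)
```

Whatever the weights, the result always lies between the two parents in every dimension, which is exactly why this approach cannot produce a trait that neither parent visibly has.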

Color Grading and Texture Synthesis

Geometry is only half the battle; a realistic portrait requires accurate skin tones, hair textures, and lighting. After the structural wireframe is built, the AI initiates color grading and texture synthesis. It analyzes the pixel data from the parents' photos to determine the precise hex codes of their skin, hair, and eye colors.

The software then blends these colors using sophisticated algorithms that account for natural human pigmentation. It doesn't simply mix colors like paint; it understands how melanin works visually. It synthesizes skin textures, adding subtle variations, pores, and natural shadows to ensure the final image does not look like a flat, plastic doll. This texture synthesis is what gives modern AI-generated images their startling, lifelike quality, tricking the human eye into perceiving depth and biological authenticity where there is only code.
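As a rough illustration of why blending colors is more than averaging hex codes, the sketch below mixes two colors in linear light using the standard sRGB transfer function rather than averaging the raw values. It is an assumption-laden simplification: the two hex values are arbitrary sample tones, and this is a generic color-science technique, not a model of melanin and not the method of any specific generator.

```python
def srgb_to_linear(c8):
    """Convert an 8-bit sRGB channel (0-255) to linear light (0.0-1.0)."""
    c = c8 / 255.0
    return c / 12.92 if c <= 0.04045 else ((c + 0.055) / 1.055) ** 2.4

def linear_to_srgb(value):
    """Convert linear light (0.0-1.0) back to an 8-bit sRGB channel."""
    c = 12.92 * value if value <= 0.0031308 else 1.055 * value ** (1 / 2.4) - 0.055
    return round(min(max(c, 0.0), 1.0) * 255)

def hex_to_rgb(hex_color):
    """Parse '#rrggbb' into a tuple of three integers."""
    h = hex_color.lstrip("#")
    return tuple(int(h[i:i + 2], 16) for i in (0, 2, 4))

def blend_hex(hex_a, hex_b, weight_a=0.5):
    """Blend two colors in linear light; weight_a is the share of color A."""
    channels = []
    for a, b in zip(hex_to_rgb(hex_a), hex_to_rgb(hex_b)):
        mixed = weight_a * srgb_to_linear(a) + (1.0 - weight_a) * srgb_to_linear(b)
        channels.append(linear_to_srgb(mixed))
    return "#{:02x}{:02x}{:02x}".format(*channels)

# Arbitrary sample tones standing in for colors sampled from two photos.
blended = blend_hex("#8d5524", "#f1c27d")
print("blended tone:", blended)
```

Averaging the raw hex values instead would give a visibly darker result, which is one small reason naive pixel mixing tends to look muddy.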

Applying Age and Gender Modifiers

The final layer of the technical workflow involves applying specific modifiers for age and gender. Because the structure of a human face changes dramatically from infancy to adulthood, the AI must apply morphokinetic algorithms to simulate aging.

If a user requests an image of a toddler, the software adjusts the geometric wireframe to reflect typical pediatric proportions: larger eyes relative to the head size, a smaller nasal bridge, and fuller cheeks (buccal fat pads). If the user requests a teenage or adult projection, the AI elongates the jawline, refines the nose, and adjusts the hairline. Similarly, gender modifiers apply subtle shifts in brow prominence, jaw angularity, and skin texture based on the statistical differences found in the AI's training data. This multi-layered processing results in the final, high-resolution portrait delivered to the user.

Realistic Expectations: What AI Can and Cannot Do

With a clear understanding of both the biological complexities and the technical workflows, expectant parents can approach these tools with a grounded perspective. Setting realistic expectations ensures that the experience remains a joyful exercise in imagination rather than a source of anxiety or disappointment.

The Boundaries of Algorithmic Prediction

It is crucial to state clearly: artificial intelligence cannot predict the exact face of your future child. The boundaries of algorithmic prediction are firmly set by the limitations of visual data. The AI excels at creating a highly plausible, aesthetically pleasing composite that shares a strong family resemblance with the provided photos. It is exceptionally good at predicting general skin tones, the average shape of the eyes, and the likely texture of the hair.

However, the AI cannot predict genetic anomalies, random mutations (known as de novo mutations), or the expression of hidden recessive traits. It cannot foresee if your child will inherit a specific, quirky smile from a great-grandparent or if they will have a unique pattern of freckles. The software produces a single statistically plausible outcome out of millions of biological possibilities.

The Uncanny Valley and Micro-Expressions

Another limitation to be aware of is the phenomenon known as the "uncanny valley." This is a concept in aesthetics where a human replica looks almost, but not quite, perfectly human, eliciting a feeling of unease. While modern generators have largely bridged this gap, you may occasionally notice that the generated images lack the spark of life found in real photographs.

This occurs because AI struggles to replicate micro-expressions—the tiny, involuntary facial movements that convey emotion and personality. A biological child's face is animated by their unique spirit, their specific way of squinting when they laugh, or the way their brow furrows in concentration. An AI-generated image is a static, idealized composite. It lacks the asymmetrical quirks and dynamic expressions that ultimately make a face recognizable and deeply loved.

Environmental Impacts on Facial Development

As previously noted in the discussion on epigenetics, the environment plays a massive role in physical development. The AI assumes a perfectly neutral developmental environment, which does not exist in reality.

Factors such as childhood accidents that leave small scars, the need for orthodontic work that reshapes the jaw, or even the climate in which a child grows up (which can affect skin texture and pigmentation over time) are entirely beyond the predictive capabilities of any software. Therefore, the image you receive should be viewed as a pristine, baseline template—a canvas upon which the realities of life have yet to leave their mark.

Navigating the Emotional Landscape

Understanding these limitations is vital for navigating the emotional landscape of pregnancy and family planning. The desire to see your child is a powerful, primal instinct. When an algorithm produces a beautiful image of a potential son or daughter, it can evoke strong emotional responses.

It is important to guard against forming rigid expectations based on these digital portraits. If your biological child is born looking completely different from the AI prediction—perhaps inheriting a recessive trait that gives them blonde hair instead of the predicted brown—this is a triumph of biological diversity, not a failure. The AI image should be celebrated as a fun piece of digital art, not a blueprint that your child is expected to match.

Features to Look for in a Quality Generator

As the market for predictive imagery expands, numerous applications and websites are vying for users' attention. However, not all platforms are created equal. The underlying algorithms, the quality of the rendering engines, and the user interfaces vary wildly. Knowing what features to look for will help you select a platform that provides a high-quality, secure, and enjoyable experience.

Precision and Customization

The best platforms offer a high degree of precision in their facial mapping and allow for meaningful user customization. You should look for services that utilize the latest iterations of Generative Adversarial Networks, as these will provide the most natural blending of features without creating distorted or "muddy" artifacts around the eyes and teeth.

When seeking precision and customization, platforms like BabyGen offer a streamlined, high-quality experience. BabyGen is an AI-powered platform that generates realistic images of your future child by analyzing and blending the unique facial features of both parents. Unlike basic apps that simply overlay two faces, advanced tools like this use deep latent space interpolation to create a genuinely new, structurally sound face.

Age Progression and Gender Selection

A significant feature that elevates a predictive tool from a simple novelty to a comprehensive visualization platform is the ability to manipulate age and gender. Biology dictates that a child's face changes drastically over time, and a static baby photo only tells a fraction of the story.

Quality platforms allow you to explore these temporal changes. For instance, BabyGen allows users to select specific parameters, such as the baby's age from 1 to 25 years, and gender. This feature is particularly fascinating because it applies different morphokinetic algorithms depending on the selected age bracket. Seeing a projection of what your child might look like as a toddler, a teenager, and a young adult provides a much richer, more engaging narrative experience than a single infant photo.

High-Resolution Rendering Capabilities

The output quality of the generated image is another critical factor. Early iterations of this technology often produced low-resolution, blurry images that were difficult to print or share. Today, you should expect high-resolution rendering capabilities that produce crisp, clear portraits.

High-resolution outputs ensure that the synthesized skin textures, the reflections in the eyes, and the individual strands of hair are rendered with photorealistic clarity. This level of detail is essential if you plan to use the images for creative projects, such as framing them for a baby shower display or including them in a digital pregnancy journal.

User-Friendly Interfaces and Token Systems

Finally, the user experience should be intuitive and transparent. You should not need a degree in computer science to navigate the platform. The process of uploading photos, selecting parameters, and generating the image should be seamless.

Furthermore, transparent pricing models are a hallmark of reputable services. Many high-quality platforms operate on a token-based system, which provides flexibility and clarity. For example, a system where 1 token equals 1 generated image allows users to control their spending precisely. Users can often generate images with a one-time $2 purchase or opt for an active token pack if they wish to experiment with multiple age and gender combinations. This pay-as-you-go approach is generally preferable to expensive, recurring subscription models.

Privacy and Data Security in AI Tools

Perhaps the most critical aspect of using any biometric software is understanding how your data is handled. When you upload clear, front-facing photographs of yourself and your partner, you are transmitting highly sensitive biometric data. In an era where digital privacy is paramount, evaluating the security protocols of an AI platform is just as important as evaluating its image quality.

The Risks of Biometric Data Retention

Facial geometry is a unique identifier, much like a fingerprint. If biometric data falls into the wrong hands, it can potentially be used for identity theft, unauthorized tracking, or training third-party facial recognition systems without your consent.

Many free, ad-supported applications in the app stores have notoriously poor privacy policies. They may retain your uploaded photographs indefinitely, claim ownership of the generated images, or sell your biometric data to data brokers and marketing firms. Before using any service, it is imperative to read the terms of service and understand exactly what rights you are granting the company regarding your personal images.

Ephemeral Processing and Secure Architectures

To protect your family's privacy, prioritize platforms that use ephemeral processing. This means that your data is kept only for the generation process itself, or for a short, clearly stated retention window, and is never written to a permanent database.

A privacy-first approach is non-negotiable. For example, BabyGen operates without requiring user registration, meaning your biometric data is never tied to an email address or personal profile. Furthermore, it ensures that all uploaded photos and generated results are securely processed and automatically deleted after 24 hours. This type of strict data purging policy is the gold standard in the industry, ensuring that your digital footprint is erased and your family's faces remain your private property.
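A time-limited retention policy of this kind is conceptually simple. The sketch below shows one way a 24-hour purge could work, assuming an in-memory store keyed by an anonymous upload ID; the class and method names are hypothetical, and this does not describe how BabyGen or any other platform is actually implemented.

```python
import time

RETENTION_SECONDS = 24 * 60 * 60  # 24-hour retention window

class EphemeralStore:
    """In-memory store that forgets every item after the retention window."""

    def __init__(self, clock=time.time):
        self._clock = clock  # injectable so the expiry logic can be tested
        self._items = {}     # upload_id -> (stored_at, data)

    def put(self, upload_id, data):
        """Keep data in memory only, stamped with the current time."""
        self._items[upload_id] = (self._clock(), data)

    def purge_expired(self):
        """Delete everything older than the retention window."""
        cutoff = self._clock() - RETENTION_SECONDS
        expired = [key for key, (stored_at, _) in self._items.items()
                   if stored_at <= cutoff]
        for key in expired:
            del self._items[key]
        return len(expired)

    def get(self, upload_id):
        """Return the data if it is still within the window, else None."""
        self.purge_expired()
        entry = self._items.get(upload_id)
        return entry[1] if entry else None
```

Because nothing here ties an upload to an email address or account, there is also no profile left behind once the entry is purged.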

Best Practices for Safe Usage

Even when using secure platforms, there are best practices you should follow to maximize your privacy:

- Avoid Background Identifiers: When selecting photos to upload, crop them closely to your face. Avoid uploading images that show the outside of your home, your license plate, or identifying workplace badges in the background.

- Do Not Upload Photos of Minors: If you already have children, avoid uploading their photos to test the system unless you are absolutely certain of the platform's immediate deletion policies.

- Use Secure Connections: Ensure you are accessing the platform over a secure, encrypted connection (look for HTTPS in the web address) to prevent data interception during the upload process.

- Download and Store Locally: Once your image is generated, download it directly to your personal device and rely on the platform's automatic deletion protocols to clear the server.

Enjoying the Experience Responsibly

When approached with the right mindset, using predictive imagery can be a deeply joyful and connecting experience. It offers a unique way to engage with the abstract concept of a future child, making the anticipation of parenthood feel a little more tangible.

The Psychology of Anticipation

Pregnancy and family planning involve a significant amount of psychological preparation. For many parents, especially those experiencing their first pregnancy, the baby can feel like an abstract concept for many months. Visual aids play a crucial role in the psychological process of bonding.

Seeing a high-quality, AI-generated image of a potential child may trigger the release of oxytocin, the hormone associated with bonding and affection. It allows parents to project their hopes, dreams, and love onto a tangible visual representation. It sparks conversations between partners about whose traits might be dominant, what their child's personality might be like, and how they envision their future family dynamic. This imaginative play is a healthy, normal part of preparing for parenthood.

Integrating AI into Family Celebrations

These digital portraits also offer wonderful opportunities for sharing the joy of anticipation with extended family and friends. Because the images are easily downloadable and shareable, they can be integrated into various family celebrations.

- Baby Showers: Print a few different variations of the generated images (perhaps showing different ages or genders) and display them at a baby shower. It serves as a fantastic icebreaker and conversation piece for guests.

- Gender Reveals: If you are planning a gender reveal, incorporating an AI projection of a boy or a girl adds a unique, personalized touch to the announcement.

- Pregnancy Journals: Include the printed images in a physical pregnancy scrapbook or digital journal. Years later, it will be incredibly fun to compare the AI's prediction with the actual photographs of your growing child.

- Partner Bonding: Spend an evening with your partner generating different combinations. It is a lighthearted way to connect, laugh, and dream together during a time that can otherwise be filled with medical appointments and stressful preparations.

Managing Expectations with Humor and Grace

The key to enjoying this technology responsibly is maintaining a sense of humor and grace. Remember that the AI is essentially performing a highly sophisticated magic trick. It is blending pixels, not sequencing DNA.

If the software generates an image that looks entirely unexpected—perhaps giving your child a surprisingly prominent nose or a hair color that hasn't been seen in your family for generations—laugh it off. Use it as an opportunity to marvel at the unpredictable nature of genetics. The true joy of parenthood lies in the unfolding mystery of who your child will become, both physically and personally. No algorithm can predict the sound of their laugh, the kindness in their heart, or the unique light in their eyes.

Final Thoughts: A Fun Peek into the Future

The intersection of artificial intelligence and human biology offers a fascinating playground for the imagination. When asking how accurate an AI baby generator is, the answer lies in understanding the difference between statistical probability and biological reality. These advanced algorithms are incredibly adept at analyzing facial geometry, blending skin tones, and synthesizing textures to create breathtakingly realistic portraits. They provide a highly plausible, aesthetically pleasing glimpse into what your future child might look like based on the visible traits of you and your partner.

However, they cannot account for the beautiful chaos of genetic recombination, the hidden influence of recessive alleles, or the lifelong impact of environmental factors. The images they produce are not medical forecasts; they are creative, digital keepsakes designed to spark joy, facilitate bonding, and celebrate the anticipation of new life.

By choosing platforms that prioritize precision, offer customizable features like age progression, and firmly protect your biometric data through strict deletion policies, you can explore these digital possibilities safely and securely. Embrace the technology for the wonder it provides, while remaining open to the beautiful, unpredictable reality that biology will eventually reveal.

Frequently Asked Questions (FAQ)

Q1: Do AI baby generators use real genetics to create images?

No, these platforms do not use real genetics or DNA analysis. They rely entirely on machine learning algorithms that analyze the visible physical traits (phenotype) in the uploaded photographs, blending facial geometry and color data to produce a statistically plausible image of a child's face.

Q2: Can these tools predict exact eye or hair color?

While AI can make an educated guess based on the dominant colors in the parents' photos, it cannot predict exact outcomes. It cannot detect hidden recessive genes, meaning it might miss the biological possibility of a blonde-haired or blue-eyed child if those traits are not visibly expressed by the parents.

Q3: Are my uploaded photos safe when using these platforms?

Safety varies significantly between platforms. It is crucial to use services with strict privacy-first policies, such as BabyGen, which processes data securely, does not require user registration, and automatically deletes all uploaded photos and generated results after 24 hours.

Q4: How does age progression work in these applications?

Age progression utilizes morphokinetic algorithms that adjust the facial wireframe based on statistical data of human development. The AI alters proportions—such as elongating the jawline, resizing the eyes relative to the head, and refining the nose—to simulate how a face naturally matures from infancy through young adulthood.

Q5: Why do AI-generated babies sometimes look too perfect?

AI models tend to average out facial features, resulting in highly symmetrical and idealized faces. They often smooth out the unique, asymmetrical quirks, micro-expressions, and minor imperfections that give real human faces their distinct character and lifelike quality.